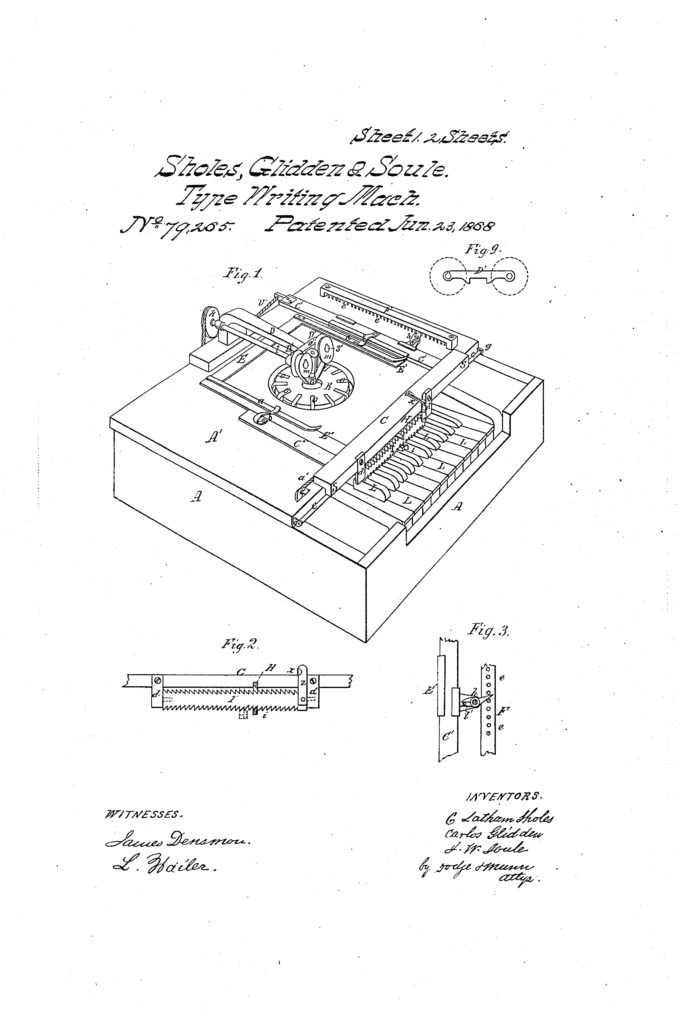

On June 23, 1868, the first typewriter patent was awarded. If someone placed an original 1868 typewriter in front of you, you might not be able to figure out what it was. With keys that looked more like they belonged on a piano keyboard, the original typewriters looked very little like the manual typewriters you might happen upon today. In its day, the introduction of the typewriter into American businesses may have been just as revolutionary as the introduction of the computer was a few decades ago.

Many inventors were working on machines that could type. In the US, one of the first commercially made typewriters was patented in 1868 by Christopher Latham Sholes, Carlos Glidden, and Samuel W. Soule. As told by the Library of Congress, “The 1868 patent was sold to E. Remington & Sons (then known for manufacturing sewing machines), who began production on March 1, 1873, under the name Sholes and Glidden Type-Writer. This model eventually became the Remington Typewriter and it is this machine that popularized the QWERTY layout we are still using on our computer keyboards.”

The invention of the typewriter led to the keyboards on the computers of today. To foster learning on this topic with your students, show them a computer and a typewriter (or two, if you can find significantly different models, such as a manual typewriter and an electric one).

Begin an inquiry-based study that compares typewriters to computers. Students can talk about everything from the appearance of the two tools, to the means by which a person gets the final, finished product (a piece of paper with alphanumeric figures on it), to the different ways that they might use the two machines if they were composing a paper.

As a conclusion to the project, ask students to hypothesize about how the shift from typewriters to computers changed the way that work is done. Challenge them to imagine what similar invention might come next!

Curious about the NCTE and Library of Congress connection? Through a grant announced recently by NCTE Executive Director Emily Kirkpatrick, NCTE is engaged in new ongoing work with the Library of Congress, and “will connect the ELA community with the Library of Congress to expand the use of primary sources in teaching.” Stay tuned for more throughout the year!

It is the policy of NCTE in all publications, including the Literacy & NCTE blog, to provide a forum for the open discussion of ideas concerning the content and the teaching of English and the language arts. Publicity accorded to any particular point of view does not imply endorsement by the Executive Committee, the Board of Directors, or the membership at large, except in announcements of policy, where such endorsement is clearly specified.